The deeper story — the one we are slower to tell, because it implicates more than our policies — is that we are in a metaphysical transition disguised as a technological one. The polycrisis we are living through is not a coincidence of independent emergencies. It is the gathering bill for several centuries of acting on a particular philosophical assumption: that the world is composed of separable parts, that minds are private theaters, that economies float free of ecologies, that the present is uncoupled from the future. We built our institutions on that assumption. We built our markets on it. And now we are building our most consequential cognitive infrastructure in human history — large-scale artificial intelligence — on it as well.

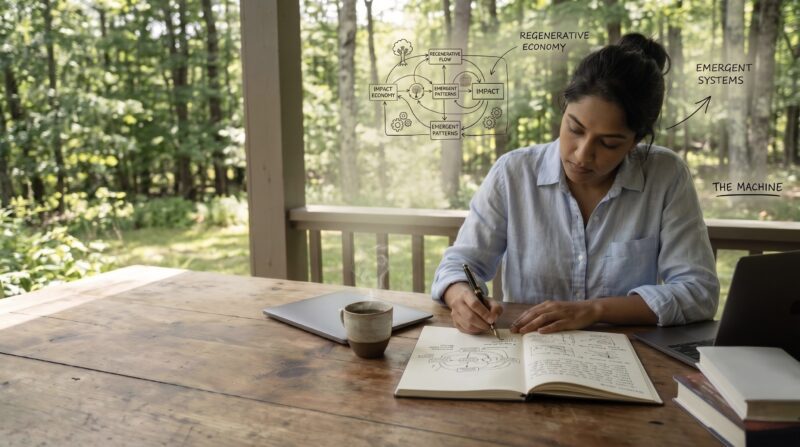

This essay is an argument that we should not. It is an argument that the regenerative economy and the future of artificial intelligence are not two separate problems to be solved sequentially, but a single problem with two faces. It is an argument that the impact economy, properly understood, has a stake in the design of these systems that the field has not yet fully claimed. And it is an argument for what I will call regenerative AI — not a product category, not a marketing term, but a stance toward the design, ownership, and governance of cognitive infrastructure that takes seriously what the older traditions and the newer sciences are both, in different ways, telling us: that we are not separate, that consequences are non-local, and that the unit of survival is the relationship.

I will not pretend this is a simple argument, or that I have all of it worked out. What follows is a sketch of a territory I think the impact economy needs to enter, and enter quickly, before the foundations of the next civilization’s mind get poured in someone else’s image.

The metaphysics in the machine

Let me begin where the conversation usually doesn’t, which is with the question of what artificial intelligence actually is.

The dominant framings get this wrong in revealing ways. Silicon Valley treats AI as a tool — a powerful one, but ontologically continuous with hammers and spreadsheets, an instrument awaiting a user’s intent. The doomer camp treats it as an emergent agent, a potential mind whose interests might one day diverge from ours. The marketing department treats it as an oracle, a personal assistant, a friend. Each of these framings carries fragments of truth, and each obscures the most important fact about the technology, which is structural rather than functional.

A large language model is, technically, a statistical compression of an enormous portion of recorded human cognition — billions of pages of text, code, conversation, image, and pattern, distilled into a substrate that can be queried and that responds. It is not a tool, exactly, and it is not a mind, exactly. It is something stranger and, I would argue, more interesting: a condensation of the cognitive commons, a partial materialization of the linguistic and conceptual layer of human collective intelligence, rendered into something we can interact with. It is closer in kind to a public reservoir than to a hammer, and closer to a library than to an assistant — though no library has ever spoken back, and no reservoir has ever been built quite like this one.

This reframing changes the questions we should be asking. The first question is not “is it conscious?” but “whose minds are in there, under what terms, and who controls access?” The second is not “will it replace us?” but “what does it mean that the cognitive infrastructure of the next civilization is being privately constructed from publicly given materials, mostly without consent, and concentrated in fewer hands than any infrastructure in human history?” The third is not “how do we make AI safe?” but “whose ontology — whose theory of what is real and what matters — gets baked into the substrate that will, increasingly, mediate human thought itself?”

That last question is the one this essay is about. Because the metaphysics in the machine is not neutral. It is a choice. And the choice currently being made — by default, by inertia, by the gravitational pull of venture capital and the quarterly return expectations that shape what gets built and what does not — is the same choice the modern world has been making for four hundred years: that reality is composed of separable parts, that value is what can be extracted from those parts, and that intelligence is what can be measured, owned, and scaled.

The regenerative economy is, at its deepest, a refusal of that choice. The question is whether we will let our most consequential new technology be built on the very ontology we have spent our careers learning to recognize as the cause of the crisis.

The ontology of separation, briefly

The metaphysical claim is doing real work in the argument, so a short detour is warranted.

The modern world inherited a particular picture of reality from Descartes, Newton, and the long Enlightenment. In this picture, the universe is composed of discrete objects in empty space, governed by mechanical laws, knowable through detached observation. Mind is private and located in skulls; matter is public and located in bodies. Causation is linear; consequences are local. The economy is a system of voluntary exchange between autonomous agents, each pursuing their separate interests. Nature is a backdrop and a resource. The future is somebody else’s problem.

This picture has been astonishingly productive — and astonishingly destructive. The same ontology that gave us modern science and modern medicine also gave us climate breakdown, biodiversity collapse, the loneliness epidemic, and the financialization of nearly everything that once stood outside the market. This is not paradox; it is consequence. A worldview that treats the world as a collection of separable parts will, given enough time and leverage, separate everything from everything else, until the relationships that actually sustain life have been dismantled and sold for parts.

Large language models are not simply tools — they are condensations of a vast, shared cognitive inheritance. The question is whether this commons is governed as a resource for all or enclosed for the few.

The traditions we marginalized while building this world — Indigenous epistemologies across continents, the contemplative lineages of every major spiritual tradition, the process philosophies of Whitehead and Bergson, the systems thinking of Bateson and Capra, the regenerative agriculture of Fukuoka and Kimmerer — were holding open a different picture. In their picture, mind is distributed across relationships rather than confined to skulls. Causation is woven rather than linear. The future is not somebody else’s problem because there is no somebody else; the seventh generation is already present in the room. The unit of analysis is the relationship, not the part.

We are now in a position where the newest sciences are converging on what the oldest traditions have been saying. Quantum physics has dispensed with strict locality. Ecology has dispensed with strict species boundaries. Cognitive science increasingly recognizes that thought is embodied, embedded, and extended across organism and environment. Microbiology has replaced the autonomous organism with the holobiont — the human as a walking ecosystem of trillions of co-constitutive cells. Even economics, in its more honest corners, is dispensing with Homo economicus in favor of a creature that is social, reciprocal, contextual, and porous.

The regenerative economy is the practical work of building economic forms consonant with this emerging picture rather than the dying one. The polycrisis is what it looks like when the dying picture’s bills come due. Artificial intelligence is the next, and possibly the most consequential, place where the choice between the two pictures will be made.

Five fault lines

If you want to see the ontology of separation in action, look at how artificial intelligence is currently being built. Five fault lines run through the dominant paradigm, and each is a place where a different ontology would do something different.

The first is the separation of training data from the people who produced it. The current paradigm treats human cultural output — books, art, code, conversation, traditional knowledge, all of it — as raw material freely available for extraction without consent, attribution, or compensation. This is the enclosure of the cognitive commons, and it is structurally identical to the enclosures of land that, beginning in 16th-century England, dispossessed peasants of their commons and converted shared resources into private property. The historians who have studied those enclosures (Polanyi, Thompson, Federici) have a name for what is happening to the cognitive commons today, and the name is not flattering. It is the largest uncompensated transfer of creative labor in human history, conducted at a speed and scale that have outpaced the legal and ethical frameworks that might have governed it.

When intelligence is built on the assumption that the world is made of separable parts, extraction becomes the default logic. The architecture of AI will either reinforce that logic or begin to dismantle it.

The second is the separation of model development from ecological consequence. Training a frontier AI model now consumes electricity on the scale of a small country and water on the scale of a small city, with hardware made from rare earths whose extraction lays waste to the lands and waters of communities who will never type a prompt. Inference — the running of the models, billions of times a day — adds another order of magnitude. These costs are externalized, invisible to the user, and accelerating. There is no regenerative AI on a dead planet. The current trajectory of AI energy demand is, in my assessment, one of the underdiscussed accelerants of the polycrisis, and the field’s tendency to wave it away with promises of future efficiency gains is the same waving-away we have seen for decades from every extractive industry that ever proposed to grow its way out of its own metabolism.

The third is the separation of the model from the labor that shapes it. The fine-tuning that makes these systems usable, the content moderation that keeps them from producing horrors, the human feedback that aligns them with our preferences — all of it depends on workers, often in the Global South, often poorly paid, often exposed to traumatic content that no human should have to see. Their dignity, well-being, and sovereignty are constitutive of the model, not external to it. A relational ontology would recognize them as co-creators with attendant rights and standing. The current paradigm treats them as a hidden labor input, the way 19th-century industry treated the children in the mines.

The fourth is the separation of intelligence from situatedness. The dominant models are deliberately placeless. The same system answers a query from Sheffield, Lagos, or Auckland in essentially the same voice, drawing from essentially the same compressed corpus, in essentially the same English-inflected register. This is sold as universality. It is, more accurately, a profound impoverishment — a flattening of the world’s languages, lineages, and ways of knowing into a single statistical average dominated by whichever cultures happened to dominate the digital corpus. Indigenous knowledge is constitutively place-based; contemplative traditions are constitutively lineage-based; regenerative practice is constitutively bioregional. An intelligence that cannot be placed cannot be accountable to place, and an intelligence that cannot be accountable to place will, in aggregate, displace the placed intelligences it encounters.

The fifth is the separation of AI development from democratic governance. The most consequential cognitive infrastructure ever built is currently being constructed by a handful of private firms — OpenAI, Anthropic, Google DeepMind, Meta, and a small number of Chinese counterparts — answerable primarily to capital. This is, structurally, an extraordinary enclosure. Imagine if the printing press had been owned by three companies. Imagine if the postal system had been a subsidiary of a shipping conglomerate. Imagine if literacy itself had been licensed. We would recognize each of those as a civilizational catastrophe, and we would be right. The current ownership structure of frontier AI is on track to be a civilizational catastrophe of similar magnitude, and the impact economy is barely paying attention.

Each of these five fault lines is a place where the ontology of separation is doing visible damage. Each is also a place where a different ontology would yield a different design. The work of regenerative AI is to articulate that different ontology and to build the institutions, certifications, capital flows, and governance frameworks that make it real.

What a relational ontology would build

I want to be concrete here, because the temptation in this kind of essay is to gesture at alternatives without specifying them, and that is precisely the move the impact economy can no longer afford. So let me sketch, briefly, what a different design pattern looks like in each of the five domains. None of this is theoretical. All of it is in early development somewhere, by someone, often underfunded and overlooked.

In place of extractive training, provenance and consent infrastructure: systems where creators can grant, condition, or revoke permission for their work to train models, with permission propagating through the supply chain; certifications such as Fairly Trained — founded by Ed Newton-Rex after he resigned from Stability AI in protest of its training practices — that distinguish consensually trained models from scraped ones; reciprocity flows that compensate communities whose knowledge shapes outputs. The B Corp movement showed what a serious certification regime can do for material goods, and the parallel here is exact: a generation ago, “responsible business” was a slogan; today, it is an architecture, with measurable standards, third-party verification, and meaningful market consequences. The cognitive commons needs the same architecture, and it needs it now.
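The permission model described above can be made concrete with a small sketch. It is an illustration under stated assumptions, not a description of how Fairly Trained or any existing registry works: the names `Consent`, `Work`, `Dataset`, and `trainable_works` are hypothetical, and conditions are reduced to simple string tags. It shows one way a creator's grant, condition, or revocation could propagate through a chain of derived datasets.

```python
from dataclasses import dataclass, field
from enum import Enum


class Consent(Enum):
    GRANTED = "granted"
    CONDITIONAL = "conditional"
    REVOKED = "revoked"


@dataclass
class Work:
    """A single creative work and the creator's current permission (hypothetical model)."""
    work_id: str
    creator: str
    consent: Consent
    conditions: frozenset[str] = frozenset()  # e.g. {"non-commercial"}


@dataclass
class Dataset:
    """A collection of works that may be derived from upstream datasets."""
    name: str
    works: list[Work] = field(default_factory=list)
    parents: list["Dataset"] = field(default_factory=list)

    def all_works(self) -> list[Work]:
        # Walk the supply chain: own works first, then everything upstream.
        collected = list(self.works)
        for parent in self.parents:
            collected.extend(parent.all_works())
        return collected


def trainable_works(dataset: Dataset, use_tags: set[str]) -> list[Work]:
    """Return the works whose consent permits the intended use.

    A conditional work is included only if every one of its conditions
    appears in use_tags; a revoked work is never included.
    """
    allowed = []
    for work in dataset.all_works():
        if work.consent is Consent.GRANTED:
            allowed.append(work)
        elif work.consent is Consent.CONDITIONAL and work.conditions <= use_tags:
            allowed.append(work)
    return allowed


# Example: a corpus derived from an upstream archive.
essay = Work("essay-1", "A. Writer", Consent.GRANTED)
song = Work("song-1", "B. Singer", Consent.CONDITIONAL, frozenset({"non-commercial"}))
archive = Dataset("archive", works=[essay, song])
corpus = Dataset("corpus", parents=[archive])

before = trainable_works(corpus, {"non-commercial"})
essay.consent = Consent.REVOKED  # the creator withdraws permission
after = trainable_works(corpus, {"non-commercial"})
```

Because each downstream dataset resolves permissions by walking back to the original works, a revocation by one creator takes effect everywhere that work has travelled. A real system would also need identity, audit trails, and some answer for models already trained, none of which this sketch attempts.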

In place of metabolic invisibility, ecological transparency: real-time accounting of energy, water, embodied carbon, and labor inputs, displayed at the point of use the way calorie counts now appear on menus; planetary-budget bounds on AI deployment, such that compute scales within ecological reality rather than against it; bioregional water and energy budgets that no AI development can override. Sasha Luccioni’s energy reporting work at Hugging Face and the AI Energy Score project are early gestures. The mature version is a metabolic label as standard as a nutrition label, with the political infrastructure to enforce it.
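A metabolic label with a hard budget behind it can be sketched in a few lines. This is an assumption-laden illustration, not the AI Energy Score methodology or any existing tool: `Footprint`, `MetabolicLedger`, and `BudgetExceeded` are hypothetical names, the units are chosen for readability, labor inputs are left out, and how the per-request figures would actually be measured is outside its scope.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Footprint:
    """Resource cost of some AI activity (illustrative fields only)."""
    energy_wh: float  # electricity in watt-hours
    water_l: float    # water in litres
    carbon_g: float   # emissions in grams of CO2-equivalent

    def __add__(self, other: "Footprint") -> "Footprint":
        return Footprint(
            self.energy_wh + other.energy_wh,
            self.water_l + other.water_l,
            self.carbon_g + other.carbon_g,
        )

    def exceeds(self, budget: "Footprint") -> bool:
        # Over budget if any single dimension is over its bound.
        return (
            self.energy_wh > budget.energy_wh
            or self.water_l > budget.water_l
            or self.carbon_g > budget.carbon_g
        )

    def label(self) -> str:
        # The text a user would see at the point of use.
        return (
            f"{self.energy_wh:.2f} Wh | "
            f"{self.water_l:.3f} L water | "
            f"{self.carbon_g:.2f} g CO2e"
        )


class BudgetExceeded(Exception):
    """Raised when a request would push usage past the agreed budget."""


class MetabolicLedger:
    """Tracks cumulative use against a fixed budget, e.g. one set by a bioregion."""

    def __init__(self, budget: Footprint) -> None:
        self.budget = budget
        self.used = Footprint(0.0, 0.0, 0.0)

    def record(self, request: Footprint) -> str:
        projected = self.used + request
        if projected.exceeds(self.budget):
            raise BudgetExceeded("request would exceed the ecological budget")
        self.used = projected
        return request.label()
```

The design choice worth noticing is that the budget is enforced before the request is counted, so the bound cannot be overridden after the fact. Any numbers passed in are placeholders until backed by real measurement.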

In place of hidden labor, dignified co-creation: living wages, mental health protections, and ownership stakes for the workers whose labor shapes the models; transparency about labor conditions across the development chain; the same supply chain accountability that the regenerative agriculture movement has been building for a generation, applied to the cognitive supply chain. This is not radical. It is what every other mature industry was eventually compelled to provide. The question is whether AI gets there before or after the harm has compounded.

Intelligence is not universal in the abstract — it is shaped by place, climate, and the rhythms of a particular living landscape. A regenerative approach to AI begins by restoring that situatedness rather than flattening it.

In place of placeless universality, bioregional and lineage-specific models: small, place-based, community-governed models with sovereign refusal rights about how the model and its underlying data can be used. Te Hiku Media’s work building a Māori-led, Māori-governed te reo language model is the leading example, and the design pattern is generalizable. Masakhane’s African NLP work, AI4Bharat’s Indian language work, the Indigenous AI working groups that Jason Edward Lewis convenes — these are pointing the way. The opposite of one model that flattens every culture is many models that honor specific ones, federated where useful, isolated where appropriate, governed by the communities they serve.

In place of private enclosure, cooperative and public-trust ownership: cooperative AI labs in the tradition of platform cooperativism (Trebor Scholz’s work and the Start.coop ecosystem are the early scaffolding); commons-based licensing for model weights and training data, analogous to what Creative Commons did for digital culture in the 2000s; public-trust infrastructure — national or supranational compute and model commons, governed democratically — along the lines of the public AI proposals advanced by Bruce Schneier, Nathan Sanders, and Mozilla; structural anti-monopoly provisions specific to AI’s tendency toward concentration. The capital required for this work has analogs the impact economy already understands: blended finance vehicles in the tradition of Prime Coalition’s catalytic capital model, philanthropic-led pooled funds along the lines of Lever for Change, and patient equity structures with mission-lock provisions of the kind the B Corp and steward-ownership communities have been refining for two decades. None of these vehicles currently exist at meaningful scale for cooperative or commons-based AI. In my assessment, this is where the impact investing community is most asleep at the wheel, and the consequence will be a winner-take-all landscape that no amount of downstream impact work can offset.

These are sketches. Each of them is a chapter, an organization, a movement, in waiting. The work of regenerative AI, in part, is to render this counter-architecture legible, fundable, and politically viable, before the window narrows further.

The contemplative dimension

There is a layer of this argument I want to name carefully, because it is both essential to the spine of the piece and the layer most prone to being misread.

The five fault lines and the design patterns that answer them are structural. But structures do not implement themselves. They are built, defended, and inhabited by people, and the question of how those people meet the technology is not separable from the question of how the technology gets built. We are entering an era of unprecedented cognitive prosthesis. Increasingly, the thoughts that arrive in our minds will have been shaped, suggested, or summarized by AI systems whose values and incentives we cannot fully see. The capacity to discern when a thought is one’s own, when it has been suggested, when it deserves trust, and when it warrants refusal — that capacity is the human-side complement to the structural work, and it is the precondition for any of the structural work being undertaken at all.

The contemplative traditions have spent millennia developing technologies of discernment for exactly this kind of situation. Practices for distinguishing skillful from unskillful mental activity. Practices for noticing when a thought is one’s own and when it has been suggested. Practices for staying present rather than dissociated, for cultivating equanimity in the face of compelling but ultimately empty appearances, for ethical reflexivity under conditions of cognitive overload. These were not developed for the age of generative AI. They were developed for the much older condition of being a mind in a world full of seductive appearances, and they happen to be precisely the capacities this new age requires.

Indigenous traditions, for their part, have developed something else equally important: protocols of relationship with non-human intelligences. Most settler-colonial cultures lost or never developed sophisticated frameworks for relating to minds that are not human minds — to rivers, forests, mountains, ancestors as living interlocutors. Indigenous traditions have these frameworks, and they include sophisticated practices of consent, reciprocity, gift, refusal, and protocol that govern such relationships. We are now, somewhat suddenly, in a world where humans interact daily with non-human cognitive entities, and we are doing so without protocols. The result is the predictable mix of over-trust, under-trust, exploitation, and confusion.

I want to be careful here. Indigenous protocols are sovereign and not for casual borrowing; the contemplative traditions have their own integrity and lineage. The point is not that the impact economy should appropriate these frameworks. The point is that the recognition that protocols are needed, and that other traditions have developed them over thousands of years, is a contribution that the dominant AI culture badly needs to receive — on terms set by the lineage holders themselves. Without this human-side capacity for discernment and protocol, the structural work cannot be governed, the design patterns cannot be implemented with integrity, and the regenerative economy itself cannot articulate what it most needs to say. The convergence of contemplative literacy, Indigenous protocol, and AI literacy will, I suspect, be one of the defining educational projects of the next quarter-century. It will not happen by itself.

Why this matters now, and to us

I am writing this for the impact economy because the impact economy is, in my assessment, the constituency best positioned to do this work and the one currently doing the least of it.

Regenerative AI is not yet a defined field — it is an emerging inquiry. The work ahead is to observe, articulate, and build new forms of intelligence that honor relationship rather than erase it.

The reason is structural. The impact economy already understands, in its bones, that ownership matters more than mission statements; that capital structure shapes outcome; that certifications and standards are the scaffolding on which values become durable; that the difference between extraction and regeneration is not rhetorical but architectural. It already knows how to fund what venture capital won’t. It already knows how to build certifications that the market eventually has to take seriously. It already knows how to talk about the commons without flinching.

The impact economy also has a habit, which is to treat technology as something that happens to it — a productivity tool to adopt, a sector to invest in, a consideration to factor in. That habit will not survive the next decade. AI is not a sector. It is the substrate on which most subsequent collective cognition will occur, including the cognition that the regenerative economy depends on to articulate itself. The ontology baked into that substrate will shape the ontology we are capable of thinking from. We are about to pour the foundation of the next civilization’s mind. The question is whether we pour it on the assumptions of the old ontology or the emerging one.

The honest news is that almost all current AI development is happening on the old ontology, and the window for meaningful intervention is narrowing. The hopeful news is that the alternative is not theoretical. Te Hiku Media exists. DAIR exists. Fairly Trained exists. The cooperative AI movement exists. The Indigenous data sovereignty frameworks exist. The contemplative-AI dialogues at the Garrison Institute and Mind & Life exist. The public AI proposals exist. What does not yet exist, in mature form, is the patient capital, the certification regime, the editorial infrastructure, the political coalition, and the intellectual frame that would knit these efforts into a movement capable of contesting the dominant trajectory.

That work is the work of the next decade, and it is precisely the work the impact economy is structured to do. The question is whether we will recognize it as our work in time.

Regenerative AI, defined

Let me name the term, since the work of the years ahead will depend in part on whether it can travel.

Regenerative AI is artificial intelligence designed, owned, and governed in ways that strengthen the commons it depends on rather than enclose them. It rests on four design principles. First, the people whose work and knowledge train these systems are co-creators, with rights of consent, attribution, reciprocity, and refusal. Second, the ecological and human costs of building and running these systems are made visible at the point of use and bounded by planetary reality. Third, intelligence is plural and placed — accountable to specific communities, languages, and lineages rather than flattened into a universal average. Fourth, ownership and governance are structurally diversified, with public-trust, cooperative, and commons-based forms holding meaningful ground alongside commercial ones.

These principles are not a checklist. They are the architecture of a stance. An AI system that satisfies all four is participating in the regenerative economy. One that satisfies none is participating in the enclosure of the cognitive commons, regardless of what its marketing says. Most current systems sit somewhere in between, and the work of the impact economy is to build the capital, certification, and governance infrastructure that moves the field, deliberately, toward the relational pole.

A closing image

Let me end with an image, because the abstractions only go so far.

Imagine a forest. Not the forest of forestry economics — a stand of timber waiting to be harvested — but the forest as ecologists like Suzanne Simard have come to understand it: a vast network of roots and fungi, of mycelial threads exchanging nutrients and signals between thousands of organisms, of mother trees feeding seedlings across species lines, of a distributed intelligence that operates by relationship rather than by individual computation. The forest has no central processor. It has no owner. It cannot be reduced to any of its parts. It is, in a literal and biological sense, a non-local consciousness — a pattern of relationship that no individual tree could embody alone, and that none of them could survive without.

Now imagine that we had been told, for four hundred years, that the forest was a collection of independent trees. That the mycelial network did not exist. That value was what could be extracted from any single trunk. That the forest’s intelligence was a romantic illusion. Imagine we had built our economies on that picture, our laws, our metaphysics, our sciences. Imagine we had nearly destroyed the forest before we noticed what we had been destroying.

This is, more or less, the situation we are in. The forest is the world. The trees are us — and the rivers, the oceans, the atmospheres, the cultures, the markets, the minds. The mycelial network is the pattern of relationship that has been there all along, sustaining everything, invisible to the picture we inherited. The regenerative economy is the work of remembering the network. Artificial intelligence is the place where we will either remember it at the level of our most consequential new infrastructure, or forget it more thoroughly than we have ever forgotten anything.

Regenerative AI, then, is not a technology. It is a stance. It is the insistence — at the level of design, ownership, governance, and use — that the next great cognitive infrastructure of human civilization be built in honor of the network rather than in denial of it.

We have time, but not much. The roots are still alive. The work is to feed them.

—

Laurie Lane-Zucker’s forthcoming book The Impact Entrepreneur Breakthrough: A Field Manual for the Regenerative Economy will be published by Berrett-Koehler in September 2026. This essay is the first in a planned series on Regenerative AI.